You might have heard about “Big Data” but what I want to focus on today is HUMAN Big Data – the information that is gathered about human attitudes and behaviours – particularly in the on-line world. We can collect data about (almost) everything, but can we make sense of it? How do our attitudes about our attitudes about our attitudes affect how we understand Human Big Data, and how we use it effectively.

I was an engineer from an early age – you know, the usual stuff – taking things apart, loosing bits, and eating too many sweets. However, I’ve always been fascinated by people, and by learning. That lead to a Post-Graduate Certificate in Education, and more recently, a Diploma in Psychology. I spend my time straddling the worlds of people and technology, helping them get the best from each other!

From scarce data to abundant data

We are in the middle of a world-changing shift. When I started working in the business world, all of the data for running the business would fit into a single file in today’s spreadsheet applications. Data was scarce, highly sought after, pored over in intimate detail and highly valued. Today we generate huge amounts of data every single day, and one of the biggest challenges in business is dealing with information-overload. The focus is less on gathering data, and more on curating and making sense of it. Technology to the rescue…

IBM’s “portable” hard drive solution from the late 50’s. You’ve probably seen this photo, or variations of it. That drive is actually less than 5Meg – that’s probably less memory than is in your washing machine (at least if you’ve every washed a USB memory stick by accident it is!). Today though, storage is cheap. By the end of this decade, you will likely be able to affordably store the whole of today’s internet in the palm of your hand. That’s changing how we build computer systems, but also changes how we deal with data.

Unstructured data? Not a problem.

The other big, historical, challenge with data was that we needed to have a good idea of what we were going to collect, before we started collecting it. And if we wanted to change our minds half way through, well, that was going to mean tears before bedtime and a lot of hard work. Today, technologies like NoSQL databases mean that we don’t have to worry about the structure of the data until we want to make sense of it. We can also collect different sorts of data in the same system, capturing more or less as our sources change. Platforms like Twitter and Facebook lead to the development of technologies that can process and analyze huge amounts of data in near-real time – restructuring and refactoring it as we go. It’s a revolution in our relationship with data.

Any Questions?

So what does that all mean? Let’s step back a moment, and think about how we collect data. The traditional tool of choice for research has been the questionnaire. Brand surveys, customer satisfaction surveys, these are familiar weapons of the market researcher. Similarly, focus groups and interviews. But all of these methodologies share a challenge: They ASK people what they think. They are attitudinal self-reports: expensive to gather, and tricky to design.

Liar!

“It’s a basic truth of the human condition, that everybody lies. The only variable is about what……I’ve found that when you want to know the truth about somebody, that someone is probably the last person you should ask.” Dr Gregory House

You have to love the idea of a fictional doctor telling us that we are all liars. Of course we aren’t. But we do bend the truth occasionally. Especially if we think we are being watched (or listened too…).

People will tell you what you what to hear, what they heard and what they would like to hear… …but rarely what they think.

Socially desirable responding is a major challenge with surveys and many other traditional data gathering methods. Certainly, experts can help to minimize their impact, and control for other issues like response set bias. It gets interesting when we turn those biases from issues into data. Technology allows us to record not just the answers, but to record the response times too. This is an altogether different sort of data.

From attitudes to behaviours

We can move from ‘expressed’ measures (attitudes) to observed measures (behaviours). Of course, not all ‘behavioural’ measures are actually measures. Foursquare check ins, for example, are expressions – people don’t (usually) check in at every physical location that they visit. The choice of checking locations is an expression of attitudes about themselves and the location brands. Social media, contrary to opinion, is not about transparency. It is about continual, partial transparency. We need to get smarter about understanding the data that we collect, and learn new techniques to control for the biases in it.

Business social graph (Autodesk)

Of course some data is more ‘objective’ – this is a lovely visualisation of the Autodesk organisation over time. In our early days of working with human data, we spent quite a lot of time building these sorts of visualisation. They have become cheaper and easier to produce, and they are certainly good discussion points. The bigger lesson is that not all data matters, or at least much of the data that we see as important may not actually matter. I can predict more about the interactions of people in an organisation based on the physical distance between their desks, than I can from a hierarchical org chart. Objective information is good, but it is overly valued in business. Aggregate subjective information often tells us more. Not all opinions are meaningless!

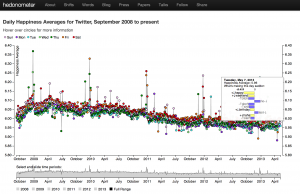

Language Tells

One of the most interesting things about social media is that it gives us more access than ever before to the raw language that people use. As software algorhythms have become more advanced, and our understanding of language has improved, we can create software that can analyze, on aggregate, the emotional content of communications. The hedonometer is a great example – how happy is the Twitter-verse today? But language tells us much more… Shared vocabulary can predict social groupings and influence, in quite unexpected ways.

Looking for patterns can seriously damage your health wealth

Edcrowle – http://www.flickr.com/photos/edcrowle/383301200/sizes/z/in/photostream/

Blindly looking for patterns is a dangerous sport. It hits many of the weak spots in our cognitive systems, and can lead us up all sorts of dark alleys.

Correlation is not causality

Get it printed on a t-shirt. Say it randomly in meetings: “Correlation is not causality.” – ok, it won’t win you many friends, but you’ll be right most of the time. The assumption that unrelated events have causal links almost makes the business world go around. Literally. It is a much harder habit to break that you might think, for reasons I’ll come on to later. When we operate in the world of human data, it is an ever present danger – misunderstanding how variables do (or don’t) relate. Camera tripods have cameras on them. Cameras take good pictures. Cameras don’t like getting wet. Andy here is on a camera tripod. He takes very good pictures. He hates getting wet. Andy is, of course, a photographer, not a camera. But ascertaining that from a few variables (rather than a few megabytes of data and a lifetime of learning) is a very non-trivial problem.

You are biased – Really biased

Perceptual / Attentional biases, observer expectancy effect, anchoring and focusing, conformation bias, availability, cascade effect, bandwagon effect… We have a sea of cognitive biases that play in to one another. We tend to fixate on the first thing that we see, becoming blind to other interpretations, we are biased towards spotting evidence that supports our hypothesis, and ignoring data that doesn’t. We value and believe things based on repetition, more than reliability, and when you put that in a social context, we support what we believe that other people believe. It is a chain of events that leads to big mistakes, and big data is high octane petrol, especially in the business context, where we value ‘objective’ data so highly. Numbers are not always objective, they are vulnerable to subjectivity.

Attention

You will have seen this video on line. Think through the consequences carefully. When told to diligently observe something, we completely miss the gorilla in the room, beating its chest. Apply that to big data. Our perceptual systems keep us safe from predators, and help use locate friends and relatives. They were not specified or tested for analysing terabytes of data on a computer screen…

But you are better than that…

Actually, there’s a name for that bias too: self-enhancement bias. We always over estimate our capabilities. When asked, on average, everyone is above average!

Presentation is bias

“[Big Data] is sometimes seen as a cure-all, as computers were in the 1970s. Chris Anderson…wrote in 2008 that the sheer volume of data would obviate the need for theory, and even the scientific method….

“[T]hese views are badly mistaken. The numbers have no way of speaking for themselves. We speak for them. We imbue them with meaning….

[W]e may construe them in self-serving ways that are detached from their objective reality.”Data-driven predictions can succeed — and they can fail. It is when we deny our role in the process that the odds of failure rise. Before we demand more of our data, we need to demand more of ourselves….Unless we work actively to become aware of the biases we introduce, the returns to additional information may be minimal — or diminishing.” Nate Silver, The Signal and the Noise

There is no such thing as a neutral presentation of data. We always bring something of ourselves to the presentation, even if it is unconscious. Phenomenological approaches to psychological research understand, embrace and control for the biases of the researcher. Ignoring them, or even worse, denying their existence, simply increases their impact. Understand why (at an emotional level) you are measuring what you are measuring, and the story you tell while you present it.

Humans communicate at the level of stories, not at the level of data, so tell stories, and understand stories. Each story is a potentially narrative. Most data has multiple potential narratives. Without a narrative, an embracing context, data is meaningless, or at least meaning less.

Reification

The biggest challenge with Human Big Data is that it breaks the scientific model. Most people working in the data processing world come from a background that draws on the epistemology of natural science. We build a hypothesis, we construct experiments, we measure things. We gradually ‘discover’ the nature of the world around us. Of course human big data doesn’t work that way. Marketing people are paid to CHANGE WHAT PEOPLE BELIEVE. So, if we are measuring what people believe (attitudes) or how they behave (which is related to what they believe – says the marketing world!), we are changing the thing that we are measuring. At a higher level, we are using the learning from big human data to architect the social construct that people operate within. Marketing has never been static. What worked yesterday, won’t always work today. Humans adapt and normalise. If you use behaviour economics in your pricing, eventually you change the behaviours. Why don’t you by the cheapest or the most expensive wine today? By operationalising measurements, we literally ‘call them into being’ – giving them substance and reality – even if they had none in the first place.

Designing out bias

When we gather and present human big data, we have to do our best to design out the biases. But we can turn this all on its head and use our biases, combined with big data, to change attitudes and outcomes. Nudge is common parlance today. Decision architectures generally play on age old cognitive biases. When we add social data we turn on the turbo button; we add social proof. The Facebook like button that you see on websites has faces on it for a reason – instrumenting behaviours and playing back the data is powerful.

The Iron Cage

But we have to be careful. It can be too powerful. Overly rationalizing the world, and using social forces to drive compliance, can lead to an icy and brittle world – to borrow from Max Weber. We need to use these new tools with caution. This is not a single move chess game, and eventually there is a tipping point at which contrarian approaches become the dominant strategy. If we measure emotional responses, and engineer the ultimate film script, and then every film studio follows it, suddenly it becomes bland. We have to apply our learning lightly, and with a bit of fun…

Game mechanics

Probably the fastest way to start a fist fight at a game developers conference is to describe “gamification” as psychology for dummies. Ok. Some parts of that statement might not be true. But what is true is that we can usefully borrow from the tool box of gamification. In gaming, the players enter “the circle” of the game – temporarily adopting a perspective on how the world works. We can escape the game. When we can’t, it stops being a game. There is something else that gamification gives us: The construction of measures. Not everything that we want to measure in human big data has a metric that we can express. In the world of games, we create measures and play them back. Number of lives, energy level… Constructed measures can be used to turn the disadvantages of reification into a positive advantage. We create and combine the things that we can measure, into new measures. Number of twitter followers, number of Facebook likes. These are actually all just constructed measures, which are used to drive behaviours. The players play the game to earn the points they need to level up!

The road ahead

An exciting journey, with some risky pitfalls, is ahead. Our relationship with data has changed irrevocably, as has our relationship with ourselves and society. New thinking is need to understand how we best make use of the data that we collect about ourselves, be it Google Glass, the instrumented-self or a Facebook marketing campaign. We are measuring more and more, and understanding less and less. The technology exists to turn that around; the next generation of billion dollar businesses will be the ones that create and operationalise a deep understanding of human big data…